
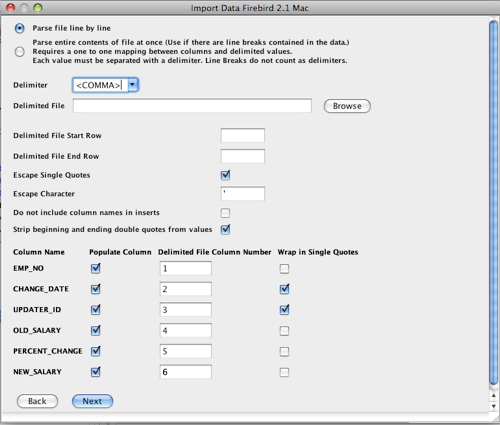

The '.import' command takes two arguments: the source from which data is to be read and the name of the table into which the data is to be inserted.
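The two arguments can also be supplied from a script rather than typed at the prompt. The following is a minimal sketch, not the only way to do it: it feeds the dot-commands to the sqlite3 shell on standard input, which avoids relying on shell-specific redirection. The function names `build_import_script` and `import_csv_via_cli` are illustrative, and the sketch assumes the sqlite3 command-line shell is on PATH and that the file and table names contain no whitespace or quotes.

```python
import shutil
import subprocess


def build_import_script(csv_path: str, table: str) -> str:
    """Return the dot-commands that would be typed at the sqlite3 prompt."""
    # Assumption: csv_path and table contain no whitespace or quotes.
    return f".mode csv\n.import {csv_path} {table}\n"


def import_csv_via_cli(db_path: str, csv_path: str, table: str) -> int:
    """Feed the dot-commands to the sqlite3 shell on stdin; return its exit code."""
    if shutil.which("sqlite3") is None:
        raise FileNotFoundError("sqlite3 command-line shell not found on PATH")
    result = subprocess.run(
        ["sqlite3", db_path],
        input=build_import_script(csv_path, table),
        text=True,
    )
    return result.returncode
```

A call such as `import_csv_via_cli("database.db", "input.csv", "mytable")` would then correspond to running `.mode csv` followed by `.import input.csv mytable` in an interactive session.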

Importing files as CSV or other formats

You can import data from a CSV file into an SQLite database. Use the '.import' command to import CSV (comma separated value) or similarly delimited data into an SQLite table. This command accepts a file name and a table name. The file name is the file from which the data is read; the table name is the table that the data will be imported into.

If the CSV file must be imported as part of a Python program, then for simplicity and efficiency you could use os.system along the lines suggested by the following:

    import os

    # Note: '<<<' is a bash here-string; os.system runs the command through
    # /bin/sh, which may not support it on every system.
    cmd = '''sqlite3 database.db <<< '.import input.csv mytable' '''
    rc = os.system(cmd)
    print(rc)

The point is that the filename of the database is specified as part of the command.

Suppose you have the following users.csv file:

    userid,username
    1,pokerkid
    2,crazyken

Pandas makes it easy to load this CSV data into a sqlite table:

    import pandas as pd

    # load the data into a Pandas DataFrame
    users = pd.read_csv('users.csv')
    # write the data to a sqlite table (conn is an open database connection)
    users.to_sql('users', conn, if_exists=...)

It means the first row of your CSV file isn't a header listing the names of the columns. You can pre-define the schema, as you discovered, to get around this. Unless you depend on the column affinities being set up in a certain way, it's simpler to just add a header row to the input file. Several other programs expecting CSV/TSV style input also want that header.

    lab-1:/etc/scripts sqlite3 test.db '.mode csv.import /tmp/deleteme.csv users'

I don't get errors but I also don't end up with any data in the users table.

I have a base with 81,372,577 observations whose columns are separated by semicolons. I am using the following command to import this database:

    .import c: /users/inspiron/desktop/people2012.csv people2012
    CREATE TABLE people2012(...) failed: duplicate column name:

So, as the procedure didn't work, I tried to create the table first (with the columns separated by a comma):

    C: /users/inspiron/desktop/pessoas2012.csv:11: expected 120 columns but found 1 - filling the rest with NULL

However, when I query the base, there is no value in the columns:

    sqlite> select * from people2012 limit 10

I assume this isn't a literal copy-and-paste from a SQLite command session, else later parts of your explanation wouldn't be working, which then leads me to ask: why are you posting commands here that differ from what you're actually typing? Why make us second-guess your description in order to make any sense of it? Why are you putting a space between the "c:" bit and the rest of the path? That's two paths, the first meaning "the current working directory on the C drive".

    .separator 'ooga booga'

As for the rest, SQLite is telling you the problem: the separator isn't matching somehow. ...and then you go on to show semicolons and double-quotes instead. Once again, one thing is happening on your local computer, but you then go on to show us something different.

.separator also affects SQLite's output, not just its input. I expect this is just a reflection of your earlier .mode selection, telling it to use semicolons as separators, and that the difference is due to restarting sqlite3, causing the separator and other modes to be reset to their defaults.

I have a situation where I have CSV files with column names in the first row, which perfectly match the tables in my SQLite3 db, except they are in a different order. If I run

    .import table1.csv table1

SQLite3 will just treat the column names as a data row. I want it to not treat it as a data row, but use it to determine which column the data should be added to.
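One way to get that behaviour, with the header row deciding which column each value goes into, is to read the header in Python and name the columns explicitly in the INSERT statement. The sketch below uses only the standard library and is not a feature of '.import' itself. The function name `import_by_header` and its `delimiter` parameter are illustrative, and it assumes the table already exists and that table and column names contain no double quotes.

```python
import csv
import sqlite3


def import_by_header(conn, lines, table, delimiter=","):
    """Insert delimited rows into an existing table, matching columns by name.

    `lines` is an open text file (or any iterable of lines) whose first
    row holds the column names.
    """
    reader = csv.reader(lines, delimiter=delimiter)
    header = next(reader)  # column names, in the file's own order
    # Assumption: table and column names contain no double quotes.
    cols = ", ".join(f'"{name}"' for name in header)
    marks = ", ".join("?" for _ in header)
    sql = f'INSERT INTO "{table}" ({cols}) VALUES ({marks})'
    with conn:  # commit on success, roll back on error
        cur = conn.executemany(sql, reader)
    return cur.rowcount
```

With a file on disk this might be called as `import_by_header(conn, open("table1.csv", newline=""), "table1")`. Passing `delimiter=";"` covers semicolon-separated files. Because the column list comes from the header, the order of columns in the file no longer has to match the order in the table.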
